A couple of weeks ago, I had my first virtual reality experience with an Oculus Rift dev kit. I can say with certainty that augmented reality is going to be a large part of our future. I’m very intrigued by the idea of augmented reality for shoppers wishing to try out apparel or accessories, the kind of goods that customers typically like to try on physically before they buy.

I have fleshed out a working proof-of-concept that is very light and efficient, so that even low-powered devices such as smartphones and smart displays can successfully present a proper AR virtual dressing room experience.

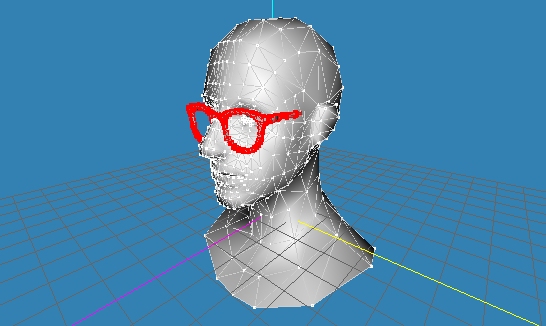

The idea is to be able to affix articles of clothing and accessories onto the user’s body in a video feed from a camera. It needs to be good enough to make the experience believable without stretching the user’s imagination. Real-time articulated full-body pose detection algorithms are a work in progress and would have to be coupled to a face tracker to get a proper usable detection, so for the sake of simplicity I have focused my efforts on the head only. I decided to choose glasses as a proof-of-concept accessory.

The project involved four stages: tracking the face of the subject, recovering the 3D pose of the face from a 2D image, aligning a 3D model of the eyewear to ‘fit’ the head, and then projecting the 3D eyewear back onto the 2D face.

Kyle McDonald’s FaceTracker library does an excellent job of tracking the face in real-time.

For projective recovery of the pose of the face, I used a 3D model of the human head to approximately determine the 3D points corresponding to the 2D points returned by the FaceTracker. A 2D-to-3D projective recovery transformation matrix T describes the transformation required to map the points on the camera feed to their corresponding locations on the head; T can be determined with some linear algebra-fu. A simple 3D model of spectacles was used as the accessory (any other accessory would have worked fine). The model was transformed once to fit the head and the file was updated, so no further transformation was required for this object.
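The post does not spell out the linear algebra, so the following is only a sketch under one common simplifying assumption: an affine (weak-perspective) camera, in which each tracked 2D point is approximately a linear function of its counterpart on the 3D head model. Under that assumption the mapping between the two point sets can be fitted by least squares from the correspondences. Note that this fits the head-to-image direction directly; the function name `fit_affine_camera` and the 2x4 matrix layout are illustrative and not taken from the original project.

```python
import numpy as np

def fit_affine_camera(model_pts_3d, image_pts_2d):
    """Least-squares fit of a 2x4 affine camera matrix P such that
    image_point ~= P @ [X, Y, Z, 1] for every 3D/2D correspondence.

    Assumes an affine camera; needs at least 4 non-coplanar points.
    """
    X = np.asarray(model_pts_3d, dtype=float)   # N x 3 head-model points
    x = np.asarray(image_pts_2d, dtype=float)   # N x 2 tracked image points
    X_h = np.hstack([X, np.ones((len(X), 1))])  # N x 4 homogeneous coordinates
    # Solve X_h @ P.T ~= x in the least-squares sense.
    P_T, *_ = np.linalg.lstsq(X_h, x, rcond=None)
    return P_T.T                                # 2 x 4
```

With the tracker's landmarks in `image_pts_2d` and the matching head-model vertices in `model_pts_3d`, the fit would be repeated for every frame, since the pose changes as the head moves.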

To determine where in the video feed the glasses should be shown, the inverse transformation of T, A = T^-1, was calculated and applied to each of the non-occluded points of the glasses, giving their positions on the video feed. These points were then displayed using OpenCV point objects.
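As a rough illustration of this step, the sketch below assumes the 3D-to-2D mapping A is available as a 2x4 affine matrix and that occluded points have already been filtered out. It projects the glasses' vertices into pixel coordinates and draws each one as a small dot with `cv2.circle`. The helper names `project_points` and `draw_points` are hypothetical, and the original code may have displayed the points differently.

```python
import numpy as np

def project_points(A, pts_3d):
    """Apply a 2x4 affine projection A to N x 3 points; returns N x 2 pixel coords."""
    pts = np.asarray(pts_3d, dtype=float)
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])  # N x 4 homogeneous coordinates
    return pts_h @ np.asarray(A, dtype=float).T

def draw_points(frame, pts_2d, color=(0, 255, 0)):
    """Draw each projected point as a small filled dot on a BGR frame, in place."""
    import cv2  # imported here so the projection step works without OpenCV installed
    h, w = frame.shape[:2]
    for u, v in np.rint(pts_2d).astype(int):
        if 0 <= u < w and 0 <= v < h:                  # skip points outside the frame
            cv2.circle(frame, (int(u), int(v)), 1, color, -1)
    return frame
```

Drawing individual dots keeps the per-frame cost low, which matches the point-based display described here, but it is not a substitute for properly rendering the model.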

All in, I was able to achieve 28 fps with this mechanism on a live feed from a webcam, with moderately accurate results. To commercialize this concept, one would need to determine the actual size of the viewer’s face so that the glasses (or other accessory) can be scaled to their true size, instead of simply being scaled to fit. Further, a fast and lightweight rendering mechanism (or other way of properly displaying the accessory) needs to be figured out – right now I just display points. Maybe sometime down the road I’ll revisit this and make a commercial-ready augmented reality retail product; let’s see. Amazon could do with something like this, of course.

For more on what I’ve done, do check out my resume.

Get in touch with me at: qasimzafar AT outlook DOT com
